SambaNova Systems has set a world speed record with Meta’s Llama 3.1 405B model, processing 114 tokens per second. The result, verified by Artificial Analysis, is more than four times faster than other providers, positioning SambaNova as a leader in AI speed and efficiency.

“I’ve been playing with SambaNova Systems’s API serving fast Llama 3.1 405B tokens. Really cool to see the leading model running at speed. Congrats to Samba Nova for hitting a 114 tokens/sec speed record,” said DeepLearning.AI founder Andrew Ng.

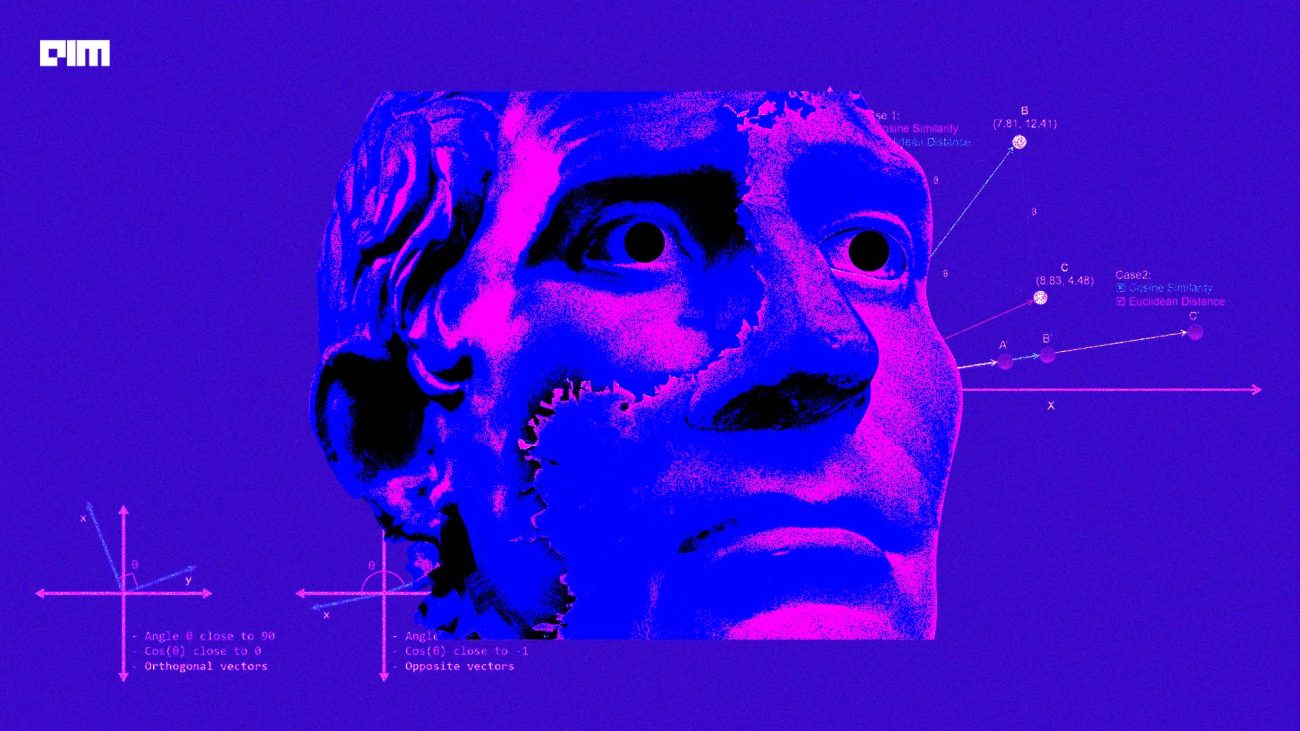

The benchmark was set using a single 16-socket node, operating with full 16-bit precision on SambaNova’s custom RDU chips. This advancement addresses the challenge of balancing quality and speed in large models like Llama 3.1 405B, enabling the deployment of the model in more speed-sensitive applications, such as customer support and AI agents.

George Cameron, Co-Founder of Artificial Analysis, confirmed the record, saying that SambaNova’s platform reduces the trade-off between model size and operational speed, making it viable for real-time applications.

SambaNova’s fourth-generation RDU chip, the SN40L, plays a critical role in this achievement, facilitating real-time processing that opens up new enterprise use cases. These include intelligent document processing, real-time AI copilots, and explainable AI, all of which benefit from the platform’s speed.

The company is offering a demo of the Llama 3.1 405B model on its website and is inviting developers to access its APIs for building enterprise-level AI applications.

SambaNova Systems is a technology company specializing in artificial intelligence (AI) hardware and software solutions. Founded in 2017 in Palo Alto, California, by Kunle Olukotun, Rodrigo Liang, and Christopher Ré, the company provides purpose-built solutions for deep learning and AI applications.

The company’s technology is built around the SN40L chip, which features a reconfigurable dataflow architecture. This design optimizes data movement and reduces latency, making it more efficient for AI tasks than traditional GPU-based systems.